Nanoced

I have completed the migration work started back in December. As a result, this site is now entirely constructed using the Nanoc static-site generator, and the Drupal content management system has been retired.

If you’re reading this through a feed reader like Feedly, please drop me a line to let me know that the new feeds are working.

Continue reading for some thoughts on the process and on the results.

Drupal is Complicated

Drupal is an extremely successful content management system. It’s used by a significant percentage of popular web sites and it wouldn’t be contentious to say that there are a million active Drupal sites out there today. Something like 100,000 people have contributed to the software in one way or another, if you include its myriad extensions.

Attracting that many deployers, many of whom will be building specific experiences for their users rather than just using the basics as I was, requires a lot of flexibility and a lot of built-in functionality that you’re probably not aware of. This complicated the conversion process in a number of ways.

One simple example was that, as it turns out, Drupal synthesised paths like /comment/73 for individual comments, and paths like /taxonomy/term/73/feed for a gratuitous RSS feed for every tag. I wasn’t aware of these, but there are bots out there who either found them or deduced their existence. Hopefully a few months of 410 Gone will eventually deter them.
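As a rough illustration of the two path shapes mentioned above, here is a minimal Ruby sketch that recognises them. The constant and method names are invented for this example, and it says nothing about how the 410 responses are actually configured on the server; it only shows which paths would qualify.

```ruby
# Hypothetical patterns for the Drupal-synthesised paths described above.
GONE_PATTERNS = [
  %r{\A/comment/\d+\z},            # individual comment pages, e.g. /comment/73
  %r{\A/taxonomy/term/\d+/feed\z}  # per-tag RSS feeds, e.g. /taxonomy/term/73/feed
].freeze

# Returns true if a request path should be answered with "410 Gone".
def gone?(path)
  GONE_PATTERNS.any? { |pattern| pattern.match?(path) }
end
```

With these patterns, `gone?("/comment/73")` and `gone?("/taxonomy/term/73/feed")` are true, while a path such as `/taxonomy/term/73` is left alone.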

The biggest Drupal-related issue with the conversion, though, was in the area of content layout. One of my goals for the initial migration was to be able to compare the old site with the new. The ideal was pixel-by-pixel accuracy so that I could simply switch between browser tabs; the human eye is pretty good at seeing differences when they are presented in that way.

It was obvious fairly quickly that Drupal’s menu system was going to have to be simulated in Ruby to allow Nanoc to generate equivalent output. For example, on pages like the colophon there’s a menu block on the left that:

- Only appears on pages matching particular path prefixes,

- Represents a hierarchical structure of content pages,

- Changes its appearance from one page to another to represent nodes in that tree opening and closing.

Now I have some code that does the same thing — at least to the extent that I used the facility on my site. I was free to ignore many of the features in Drupal’s menu system when writing my replacement code simply because the cases don’t come up in this site.
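To make the three behaviours in that list concrete, here is a small Ruby sketch. It is not the code used on this site: the `MenuNode` structure, the method names, the path prefix and the example tree are all assumptions made for illustration, and the output is plain indented text rather than Drupal-compatible HTML.

```ruby
# A hypothetical menu node: a title, a site path and an array of child nodes.
MenuNode = Struct.new(:title, :path, :children)

# Only pages under these (assumed) prefixes get the menu block at all.
MENU_PREFIXES = ['/about/'].freeze

def show_menu?(current_path)
  MENU_PREFIXES.any? { |prefix| current_path.start_with?(prefix) }
end

# True if the node is the current page or one of its ancestors.
def on_active_trail?(node, current_path)
  node.path == current_path ||
    node.children.any? { |child| on_active_trail?(child, current_path) }
end

# Render the tree as indented lines. A node's children are shown only when
# the node lies on the trail to the current page, so branches "open" and
# "close" as the reader moves around the site.
def render_menu(nodes, current_path, depth = 0)
  nodes.flat_map do |node|
    line = "#{'  ' * depth}#{node.title}"
    if on_active_trail?(node, current_path)
      [line] + render_menu(node.children, current_path, depth + 1)
    else
      [line]
    end
  end
end

# An invented example tree.
EXAMPLE_MENU = [
  MenuNode.new('About', '/about/', [
    MenuNode.new('Colophon', '/about/colophon/', []),
    MenuNode.new('Contact', '/about/contact/', [])
  ]),
  MenuNode.new('Projects', '/projects/', [
    MenuNode.new('Tools', '/projects/tools/', [])
  ])
].freeze
```

Calling `render_menu(EXAMPLE_MENU, '/about/colophon/')` lists the children of "About" but leaves "Projects" collapsed, which is the opening and closing behaviour described above.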

The most frustrating issue in this category was precise positioning of elements, and exact replication of things like font sizes. Without both of these, the “blink comparator” approach just didn’t work. My initial approach, which was to lay down a conventional set of <div> elements for what looked like the important parts of the layout, and then apply CSS styles based on inspecting element attributes from the old site, didn’t ever quite give the output I needed.

After a while, I started to look at what Drupal was doing in more detail and the problem, and its inevitable solution, became clear. As an example, if you’re reading this article on its page on the site rather than in a feed reader, the HTML hierarchy for this text will be something like this: <html>, <body>, <div>, <div>, <div>, <div>, <div>, <div>, <div>, <div>, <div>, <div>, <div>, <div>, <div>, <div>, <p>. That is fourteen levels of <div>. A significantly different but equally deep structure is used on index pages where an extract from the same article appears. In each case, the majority of those elements have an id attribute, or a class attribute, or a positional relationship with another element, any of which provide a hook for styles to be applied through CSS. Some of those <div> layers don’t look like they are doing anything, but actually do. Some of them look like they are doing something important, but actually don’t on this site because they are there for something like right-to-left language support.

Much of this complexity seems to come from the way Drupal is structured as a collection of modules, each of which contributes its own collection of <div> wrappers and some CSS to the page. The <div>s act in part to isolate the contribution of one module from that of the rest. There are interactions, however, font size being one of those. Here are some extracts from Drupal’s many CSS files:

    body {
      line-height: 1.5;
      font-size: 87.5%;
    }

    .node .content {
      font-size: 1.071em;
    }

    .node-teaser .content {
      font-size: 1em;
    }

    .comment .content {
      font-size: 0.929em;
      line-height: 1.6;
    }

Here, the font-size: 87.5% sets the default font size for the entire page to a preferred 14px, down from an assumed default of 16px. The other three definitions mean that:

- If you’re inside a <div class="content"> that’s inside a <div class="node"> then the font size should be 14 × 1.071 or approximately 15px.

- If you’re inside a <div class="content"> that’s inside a <div class="node-teaser"> then the font size should be 14 × 1 = 14px.

- If you’re inside a <div class="content"> that’s inside a <div class="comment"> then you would expect 13px because that’s roughly 14 × 0.929.
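The arithmetic in that list can be checked with a few lines of Ruby. This is only a sketch: it assumes the 16px browser default mentioned above, treats each selector in isolation, and ignores the rest of the cascade. The constant and method names are made up for the example.

```ruby
# Browser default assumed in the text above.
BROWSER_DEFAULT_PX = 16.0

# Relative factors taken from the CSS extracts (87.5% is a factor of 0.875).
BODY_SCALE = 0.875
CONTENT_SCALES = {
  'node'        => 1.071,
  'node-teaser' => 1.0,
  'comment'     => 0.929
}.freeze

# Multiply a chain of relative sizes to get a computed size in pixels.
def computed_px(*scales)
  scales.reduce(BROWSER_DEFAULT_PX) { |size, scale| size * scale }
end

# Print the computed size for each wrapper class, exact and rounded.
CONTENT_SCALES.each do |wrapper, scale|
  px = computed_px(BODY_SCALE, scale)
  puts format('.%s .content: %.3fpx (about %dpx)', wrapper, px, px.round)
end
```

The rounded results come out as 15px, 14px and 13px respectively, matching the figures in the list.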

I have no idea why anyone would think to start out by reducing the default font size in a web page; perhaps these styles originated back when screens were smaller and had much lower resolution but that doesn’t explain why the real default for content almost everywhere actually ends up being 15px.

The upside of the relative sizing is that if you want to scale the whole page up a little bit then there’s one place to do it (although you may want to adjust all the other styles to make font sizes come out to nice numbers again). The downside of the epicyclic approach is that there’s no way of doing anything without it having unexpected side-effects in other parts of the page.

As I said earlier, the solution was obvious: replicate Drupal’s element hierarchy exactly, and apply exactly the same styles. Things now match perfectly.

That’s not to say that I want things to stay exactly the same now that I have made the initial conversion; I don’t. There are things about the old site that always irritated me, such as the way that an article extract always ends with a “Read more” link even if there is no more to read.

Performance

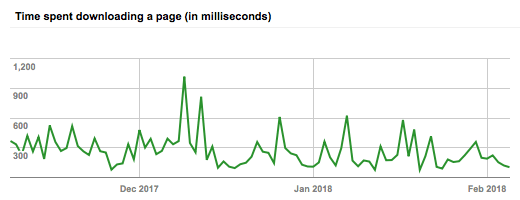

The performance of the site is, as expected, much improved. It feels far more responsive, even when the shared hosting machine is busy. Here’s a chart from Google’s webmaster tools showing how the GoogleBot sees things:

Of course the bot doesn’t pull every page on every day, so the chart is bound to be very noisy. However, if you squint a bit you can see a trend of descending peaks since I started the migration in mid-December, and since I finished a few days ago at the start of February access times have been very low indeed.

One aspect of performance I am not completely content with is that of Nanoc while building the site. This is not Nanoc’s fault; I think it’s partly to do with the site being bigger than I had realised (there are something like 850 source files) but also to do with structure. It currently takes around 58s to build the site from scratch; more than 50% of that seems to be tied up in building the menu blocks in the sidebar of every page in the blog, which can potentially be influenced by any other page on the site even though on any particular day they are all identical. For example, adding this article (the first written in February 2018) changes every blog page’s menus because every one now has a link to the February 2018 index page.

If I look at the time to preview a change to a blog page, the results are even more dramatic. Nanoc includes a sophisticated dependency calculation system that allows it to optimise incremental builds, but it is defeated by these menus: what should be an almost instant preview operation takes around 10s to perform.

Because we’re examining all n pages to compute each of the n pages, we’re dealing with an O(n²) algorithm. If instead the common text could be pre-computed once and then re-used on each page, it should be possible to reduce that to O(n) and dramatically speed up both full site builds and incremental ones.
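The difference between the two approaches can be sketched in plain Ruby. Nothing here uses Nanoc’s API: the `Page` structure, the month-based menu and the method names are assumptions for illustration, and the sketch does not address how Nanoc’s dependency tracking would treat a shared, pre-computed value.

```ruby
# Hypothetical stand-in for a blog page: a path plus its month, e.g. "2018-02".
Page = Struct.new(:path, :month)

# Building the archive menu means looking at every page once.
def build_month_menu(pages)
  pages.map(&:month).uniq.sort.reverse
end

# Naive approach: rebuild the menu for each of the n pages, so every page
# costs a full scan of all n pages and the total work grows like n squared.
def render_all_naive(pages)
  pages.map { |page| [page.path, build_month_menu(pages)] }
end

# Shared approach: build the menu once, then hand the same frozen result to
# every page, so the menu work no longer multiplies with the page count.
def render_all_shared(pages)
  menu = build_month_menu(pages).freeze
  pages.map { |page| [page.path, menu] }
end
```

Both methods produce the same output for the same input; only the amount of repeated work differs.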

Quality

I’ve had to look at a lot of the old content on this site during the migration, as there’s a limit to how much automated conversion you can do with a database dump, some quick Perl scripts and a song in your heart. If you ever look at old work, you’re bound to think that it can be improved. I’m not really interested in improving what I have written in the past — I’d rather leave it as context for a new, revised article — but there are a lot of places where how the older work looks is a source of irritation.

I’ve mentioned elsewhere that earlier versions of some of this content were written using web editing programs like Adobe GoLive or by conversion to HTML by other means. The result is that there is a lot of embedded HTML in individual page source documents along with more modern content written using Markdown. Because Markdown processors accept most HTML, you’d think that this wouldn’t be an issue. Consider the following, however:

New hotness: this is a paragraph with "quoted text".

<p>Old and busted: this is a paragraph with "quoted text".</p>

Those are not equivalent; modern Markdown processors will apply “smart quotes” to content, but not inside embedded HTML. The result, therefore, looks like this:

New hotness: this is a paragraph with “quoted text”.

Old and busted: this is a paragraph with "quoted text".

Some very old content is even worse: it uses embedded HTML, but also presents each quotation mark as the HTML entity &quot;.

I am told that there are people in the world who don’t care about this kind of typographical inconsistency; alas, I am not one of those carefree souls. These things will need to be eradicated.
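One possible clean-up step is sketched below in Ruby. It is an assumption about how the old content might be handled, not a description of an existing script. The method name is invented, and the approach is deliberately naive: it should only be applied to the text between tags (never to attribute values), it pairs quotes strictly in order, and it ignores apostrophes and unbalanced quotation marks.

```ruby
# Turn the &quot; entity back into a straight quote, then replace each pair of
# straight double quotes with a left (U+201C) and right (U+201D) curly quote.
def curl_quotes(text)
  text.gsub('&quot;', '"').gsub(/"([^"]*)"/, "\u201C\\1\u201D")
end
```

For example, both `with "quoted text".` and `with &quot;quoted text&quot;.` come out as `with “quoted text”.`.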

Nanoc comes with a couple of checks you can run on your output after compiling it. One of these throws your entire site at the W3C Markup Validation Service. Again because of my history with raw HTML via GoLive, there are a number of checks being failed there that I wasn’t previously aware of. Most of these seem to have originated with old “embed code” provided by sites like Flickr.

Nanoc’s external_links checker is also opening my eyes to the extent to which my content has “rotted” over the years in terms of referring to things that have disappeared or moved without leaving a forwarding address. I think it’s worth updating those links where possible so that what I have written is still relevant even if no longer as complete; some links will just need to be removed, or in important cases redirected to the Wayback Machine.

Summary

The migration took more effort than I was expecting, but not much more elapsed time. The new site performs as expected, although there’s some work to be done to bring my editing experience up to par. My older content needs some TLC.